Upload files to kubernetes-MD

This commit is contained in:

parent

6b1bd30fc7

commit

72c9baf96f

@ -0,0 +1,807 @@

<h1><center>Deploying a Microservices Project with Kubernetes</center></h1>

------

## I: Environment Preparation

#### 1. Kubernetes cluster environment

Check that every node in the cluster is `Ready`:

```shell
[root@master ~]# kubectl get node
NAME     STATUS   ROLES                  AGE   VERSION
master   Ready    control-plane,master   11d   v1.23.1
node-1   Ready    <none>                 11d   v1.23.1
node-2   Ready    <none>                 11d   v1.23.1
node-3   Ready    <none>                 11d   v1.23.1
```

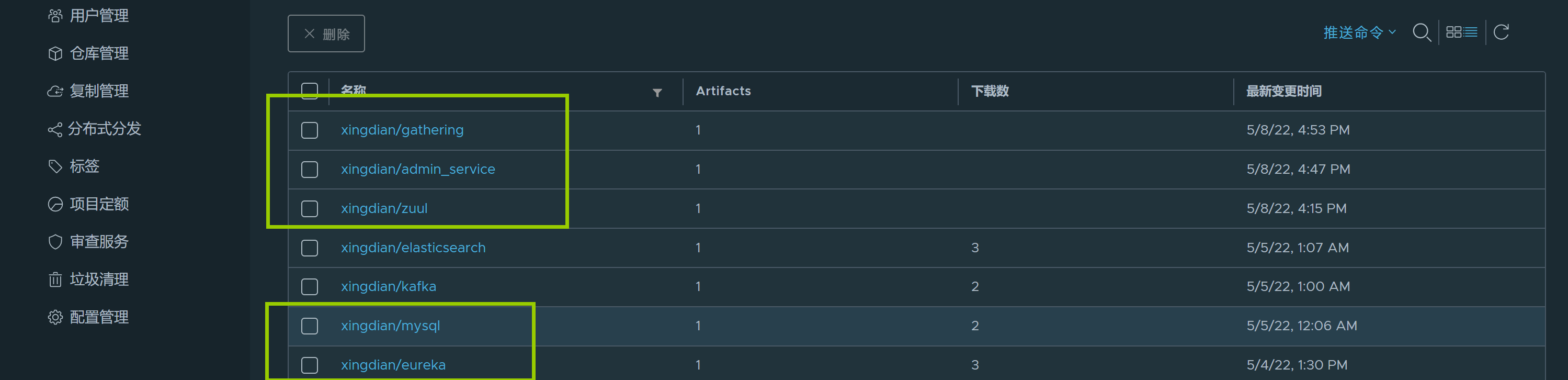
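
The readiness check above can also be scripted. The following is a minimal sketch, assuming the default column layout of `kubectl get node --no-headers` (node name first, status second); the function name `all_nodes_ready` is hypothetical and not part of the original procedure.

```shell
# Hypothetical helper: reads `kubectl get node --no-headers` output on stdin
# and succeeds only if at least one node is listed and the STATUS column
# (field 2) of every node is exactly "Ready".
all_nodes_ready() {
  awk '$2 != "Ready" { bad = 1 } END { exit (bad || NR == 0) }'
}
```

It could be used as `kubectl get node --no-headers | all_nodes_ready && echo "cluster ready"`. A cordoned node reports `Ready,SchedulingDisabled` and is deliberately treated as not ready by this sketch.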

#### 2. Harbor environment

Check that the Harbor registry is up:

<img src="https://xingdian-image.oss-cn-beijing.aliyuncs.com/xingdian-image/image-20220508222722564.png" alt="image-20220508222722564" style="zoom:50%;" />

## II: Project Preparation

#### 1. Project packages

#### 2. Project port plan

|         Service         | Internal port | External port |
| :---------------------: | :-----------: | ------------- |
| tensquare_eureka_server |     10086     | 30020         |
|     tensquare_zuul      |     10020     | 30021         |
| tensquare_admin_service |     9001      | 30024         |
|   tensquare_gathering   |     9002      | 30022         |
|          mysql          |     3306      | 30023         |
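
The external ports in the table are later used as Kubernetes `nodePort` values, which must fall inside the cluster's node port range (30000-32767 unless the API server's `--service-node-port-range` has been changed). A small sketch for checking a planned port, assuming that default range; `valid_node_port` is a hypothetical helper name.

```shell
# Hypothetical helper: succeed only if the argument is a number inside the
# default Kubernetes NodePort range (30000-32767).
valid_node_port() {
  case "$1" in
    ''|*[!0-9]*) return 1 ;;   # reject empty or non-numeric input
  esac
  [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}
```

For example, `valid_node_port 30020` succeeds, while `valid_node_port 10086` fails because 10086 is a container port rather than a usable NodePort.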

## III: Project Deployment

#### 1. Deploying Eureka

Modify the application.yml file:

```
spring:
  application:
    name: EUREKA-HA

---
# Standalone (single-node) configuration
server:
  port: 10086

eureka:
  instance:
    hostname: localhost
  client:
    register-with-eureka: false
    fetch-registry: false
    service-url:
      defaultZone: http://${eureka.instance.hostname}:${server.port}/eureka/
      #defaultZone: http://<pod-hostname>.<service-name>:<port>/eureka/
```

Create the Dockerfile:

```shell
[root@nfs-harbor jdk]# ls
Dockerfile  tensquare_eureka_server-1.0-SNAPSHOT.jar  jdk-8u211-linux-x64.tar.gz
[root@nfs-harbor jdk]# cat Dockerfile
FROM xingdian
MAINTAINER "xingdian" <xingdian@gmail.com>
ADD jdk-8u211-linux-x64.tar.gz /usr/local/
RUN mv /usr/local/jdk1.8.0_211 /usr/local/java
ENV JAVA_HOME /usr/local/java/
ENV PATH $PATH:$JAVA_HOME/bin
COPY tensquare_eureka_server-1.0-SNAPSHOT.jar /usr/local
EXPOSE 10086
CMD java -jar /usr/local/tensquare_eureka_server-1.0-SNAPSHOT.jar
```

Build the image:

```shell
[root@nfs-harbor jdk]# docker build -t eureka:v2022.1 .
```

Push the image to the registry:

```shell
[root@nfs-harbor jdk]# docker tag eureka:v2022.1 10.0.0.230/xingdian/eureka:v2022.1
[root@nfs-harbor jdk]# docker push 10.0.0.230/xingdian/eureka:v2022.1
```

Verify in the registry:

<img src="https://xingdian-image.oss-cn-beijing.aliyuncs.com/xingdian-image/image-20220508224930884.png" alt="image-20220508224930884" style="zoom:50%;" />
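
The tag-and-push pair is repeated for every service in this guide. A minimal sketch of a wrapper, assuming the same `<registry>/<project>/<name>:<tag>` layout used above; `image_ref` and `push_to_harbor` are hypothetical names, not part of the original procedure.

```shell
# Hypothetical helper: compose "<registry>/<project>/<name>:<tag>".
image_ref() {
  printf '%s/%s/%s:%s\n' "$1" "$2" "$3" "$4"
}

# Hypothetical wrapper: tag the local image <name>:<tag> for the registry
# and push it. Requires a prior `docker login` to the registry.
push_to_harbor() {
  target=$(image_ref "$1" "$2" "$3" "$4")
  docker tag "$3:$4" "$target" && docker push "$target"
}
```

With these in place, `push_to_harbor 10.0.0.230 xingdian eureka v2022.1` would perform the same two steps as the commands above.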

#### 2. Deploying tensquare_zuul

Create the Dockerfile:

```shell
[root@nfs-harbor jdk]# cat Dockerfile
FROM xingdian
MAINTAINER "xingdian" <xingdian@gmail.com>
ADD jdk-8u211-linux-x64.tar.gz /usr/local/
RUN mv /usr/local/jdk1.8.0_211 /usr/local/java
ENV JAVA_HOME /usr/local/java/
ENV PATH $PATH:$JAVA_HOME/bin
COPY tensquare_zuul-1.0-SNAPSHOT.jar /usr/local
EXPOSE 10020
CMD java -jar /usr/local/tensquare_zuul-1.0-SNAPSHOT.jar
```

Build the image:

```shell
[root@nfs-harbor jdk]# docker build -t zuul:v2022.1 .
```

Push the image:

```shell
[root@nfs-harbor jdk]# docker tag zuul:v2022.1 10.0.0.230/xingdian/zuul:v2022.1
[root@nfs-harbor jdk]# docker push 10.0.0.230/xingdian/zuul:v2022.1
```

Verify in the registry:

<img src="https://xingdian-image.oss-cn-beijing.aliyuncs.com/xingdian-image/image-20220508230055752.png" alt="image-20220508230055752" style="zoom:50%;" />

Note:

Before building the image, use vim to edit application.yml inside the jar so that it contains the following:

```yml
server:
  port: 10020 # port

# Basic service information
spring:
  application:
    name: tensquare-zuul # service ID

# Eureka configuration
eureka:
  client:
    service-url:
      #defaultZone: http://192.168.66.103:10086/eureka,http://192.168.66.104:10086/eureka # Eureka address
      # Address and port of tensquare_eureka_server (change this)
      defaultZone: http://10.0.0.220:30020/eureka
  instance:
    prefer-ip-address: true

# Ribbon timeouts
ribbon:
  ConnectTimeout: 1500 # connection timeout, default 500ms
  ReadTimeout: 3000 # request timeout, default 1000ms

# Hystrix circuit-breaker timeout
hystrix:
  command:
    default:
      execution:
        isolation:
          thread:
            timeoutInMilliseconds: 2000 # circuit-breaker timeout, default 1000ms

# Gateway route configuration
zuul:
  routes:
    admin:
      path: /admin/**
      serviceId: tensquare-admin-service
    gathering:
      path: /gathering/**
      serviceId: tensquare-gathering

# JWT parameters
jwt:
  config:
    key: itcast
    ttl: 1800000
```
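
Editing the jar by hand has to be repeated for every environment. As a possible alternative, Spring Boot can read properties from environment variables, where the variable name is derived by replacing dots with underscores, removing dashes, and upper-casing the result. The sketch below derives that name. It assumes the services run a Spring Boot version with this binding behaviour, which the source does not state, and `spring_env_name` is a hypothetical helper.

```shell
# Hypothetical helper: convert a Spring property name into the environment
# variable name Spring Boot would bind it from
# (drop dashes, dots become underscores, upper-case everything).
spring_env_name() {
  printf '%s\n' "$1" | sed -e 's/-//g' -e 's/\./_/g' | tr '[:lower:]' '[:upper:]'
}
```

For `eureka.client.service-url.defaultZone` this yields `EUREKA_CLIENT_SERVICEURL_DEFAULTZONE`, which could then be set in the container's environment instead of rebuilding the image for each Eureka address.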

#### 3. Deploying MySQL

Pull the image (the official image is used):

```shell
[root@nfs-harbor mysql]# docker pull mysql:5.7.38
```

Push the image:

```shell
[root@nfs-harbor mysql]# docker tag mysql:5.7.38 10.0.0.230/xingdian/mysql:v1
[root@nfs-harbor mysql]# docker push 10.0.0.230/xingdian/mysql:v1
```

#### 4. Deploying admin_service

Create the Dockerfile:

```shell
[root@nfs-harbor jdk]# cat Dockerfile
FROM xingdian
MAINTAINER "xingdian" <xingdian@gmail.com>
ADD jdk-8u211-linux-x64.tar.gz /usr/local/
RUN mv /usr/local/jdk1.8.0_211 /usr/local/java
ENV JAVA_HOME /usr/local/java/
ENV PATH $PATH:$JAVA_HOME/bin
COPY tensquare_admin_service-1.0-SNAPSHOT.jar /usr/local
EXPOSE 9001
CMD java -jar /usr/local/tensquare_admin_service-1.0-SNAPSHOT.jar
```

Build the image:

```shell
[root@nfs-harbor jdk]# docker build -t admin_service:v2022.1 .
```

Push the image:

```shell
[root@nfs-harbor jdk]# docker tag admin_service:v2022.1 10.0.0.230/xingdian/admin_service:v2022.1
[root@nfs-harbor jdk]# docker push 10.0.0.230/xingdian/admin_service:v2022.1
```

Note:

Before building the image, use vim to edit application.yml inside the jar so that it contains the following:

```yml
spring:
  application:
    name: tensquare-admin-service # service name
  datasource:
    driverClassName: com.mysql.jdbc.Driver
    # Database address (change this)
    url: jdbc:mysql://10.0.0.220:30023/tensquare_user?characterEncoding=UTF8&useSSL=false
    # Database user name (change this)
    username: root
    # Database password (change this)
    password: mysql
  jpa:
    database: mysql
    show-sql: true

# Eureka configuration
eureka:
  client:
    service-url:
      #defaultZone: http://192.168.66.103:10086/eureka,http://192.168.66.104:10086/eureka
      # Address and port of tensquare_eureka_server (change this)
      defaultZone: http://10.0.0.220:30020/eureka
  instance:
    lease-renewal-interval-in-seconds: 5 # send a heartbeat every 5 seconds
    lease-expiration-duration-in-seconds: 10 # expire after 10 seconds without a heartbeat
    prefer-ip-address: true

# JWT parameters
jwt:
  config:
    key: itcast
    ttl: 1800000
```

#### 5. Deploying gathering

Create the Dockerfile:

```shell
[root@nfs-harbor jdk]# cat Dockerfile
FROM xingdian
MAINTAINER "xingdian" <xingdian@gmail.com>
ADD jdk-8u211-linux-x64.tar.gz /usr/local/
RUN mv /usr/local/jdk1.8.0_211 /usr/local/java
ENV JAVA_HOME /usr/local/java/
ENV PATH $PATH:$JAVA_HOME/bin
COPY tensquare_gathering-1.0-SNAPSHOT.jar /usr/local
CMD java -jar /usr/local/tensquare_gathering-1.0-SNAPSHOT.jar
```

Build the image:

```shell
[root@nfs-harbor jdk]# docker build -t gathering:v2022.1 .
```

Push the image:

```shell
[root@nfs-harbor jdk]# docker tag gathering:v2022.1 10.0.0.230/xingdian/gathering:v2022.1
[root@nfs-harbor jdk]# docker push 10.0.0.230/xingdian/gathering:v2022.1
```

Verify in the registry:

<img src="https://xingdian-image.oss-cn-beijing.aliyuncs.com/xingdian-image/image-20220508233621370.png" alt="image-20220508233621370" style="zoom:50%;" />

Note:

As with the other services, edit application.yml inside the jar before building the image so that it contains the following:

```yml
server:
  port: 9002
spring:
  application:
    name: tensquare-gathering # service name
  datasource:
    driverClassName: com.mysql.jdbc.Driver
    # Database address (change this)
    url: jdbc:mysql://10.0.0.220:30023/tensquare_gathering?characterEncoding=UTF8&useSSL=false
    # Database user name (change this)
    username: root
    # Database password (change this)
    password: mysql
  jpa:
    database: mysql
    show-sql: true
# Eureka client configuration
eureka:
  client:
    service-url:
      #defaultZone: http://192.168.66.103:10086/eureka,http://192.168.66.104:10086/eureka
      # Address and port of tensquare_eureka_server (change this)
      defaultZone: http://10.0.0.220:30020/eureka
  instance:
    lease-renewal-interval-in-seconds: 5 # send a heartbeat every 5 seconds
    lease-expiration-duration-in-seconds: 10 # expire after 10 seconds without a heartbeat
    prefer-ip-address: true
```

## IV: Deploying to the Kubernetes Cluster

#### 1. Verify all images

#### 2. Deploying Eureka

Create the Deployment and Service for Eureka:

```shell
[root@master xingdian]# cat Eureka.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: eureka-deployment
  labels:
    app: eureka
spec:
  replicas: 1
  selector:
    matchLabels:
      app: eureka
  template:
    metadata:
      labels:
        app: eureka
    spec:
      containers:
      - name: nginx
        image: 10.0.0.230/xingdian/eureka:v2022.1
        ports:
        - containerPort: 10086
---
apiVersion: v1
kind: Service
metadata:
  name: eureka-service
  labels:
    app: eureka
spec:
  type: NodePort
  ports:
  - port: 10086
    name: eureka
    targetPort: 10086
    nodePort: 30020
  selector:
    app: eureka
```

Create the resources:

```shell
[root@master xingdian]# kubectl create -f Eureka.yaml
deployment.apps/eureka-deployment created
service/eureka-service created
```

Verify:

```shell
[root@master xingdian]# kubectl get pod
NAME                                READY   STATUS    RESTARTS   AGE
eureka-deployment-69c575d95-hx8s6   1/1     Running   0          2m20s
[root@master xingdian]# kubectl get svc
NAME             TYPE       CLUSTER-IP       EXTERNAL-IP   PORT(S)           AGE
eureka-service   NodePort   10.107.243.240   <none>        10086:30020/TCP   2m22s
```

<img src="https://xingdian-image.oss-cn-beijing.aliyuncs.com/xingdian-image/image-20220508235409218.png" alt="image-20220508235409218" style="zoom:50%;" />
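
The application.yml files in this guide reach Eureka through a node IP and NodePort (`http://10.0.0.220:30020/eureka`). Pods inside the cluster can usually reach a Service by its DNS name instead, which does not depend on any node's address. A minimal sketch, assuming the cluster runs cluster DNS with the default `cluster.local` domain and the Service lives in the `default` namespace; `svc_url` is a hypothetical helper.

```shell
# Hypothetical helper: build the in-cluster URL of a Service.
# Arguments: service name, service port, path, optional namespace
# (falls back to "default"). Assumes the cluster domain is cluster.local.
svc_url() {
  printf 'http://%s.%s.svc.cluster.local:%s%s\n' "$1" "${4:-default}" "$2" "$3"
}
```

`svc_url eureka-service 10086 /eureka` prints `http://eureka-service.default.svc.cluster.local:10086/eureka`. Note that the in-cluster URL uses the Service port (10086), not the NodePort (30020).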

#### 3. Deploying Zuul

Create the Deployment and Service for Zuul. The Service's `targetPort` must match the container port (10020):

```shell
[root@master xingdian]# cat Zuul.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: zuul-deployment
  labels:
    app: zuul
spec:
  replicas: 1
  selector:
    matchLabels:
      app: zuul
  template:
    metadata:
      labels:
        app: zuul
    spec:
      containers:
      - name: zuul
        image: 10.0.0.230/xingdian/zuul:v2022.1
        ports:
        - containerPort: 10020
---
apiVersion: v1
kind: Service
metadata:
  name: zuul-service
  labels:
    app: zuul
spec:
  type: NodePort
  ports:
  - port: 10020
    name: zuul
    targetPort: 10020
    nodePort: 30021
  selector:
    app: zuul
```

Create the resources:

```shell
[root@master xingdian]# kubectl create -f Zuul.yaml
```

Verify:

```shell
[root@master xingdian]# kubectl get pod
NAME                                READY   STATUS    RESTARTS   AGE
eureka-deployment-69c575d95-hx8s6   1/1     Running   0          7m42s
zuul-deployment-6d76647cf9-6rmdj    1/1     Running   0          10s
[root@master xingdian]# kubectl get svc
NAME             TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)           AGE
eureka-service   NodePort    10.107.243.240   <none>        10086:30020/TCP   7m37s
kubernetes       ClusterIP   10.96.0.1        <none>        443/TCP           11d
zuul-service     NodePort    10.103.35.255    <none>        10020:30021/TCP   5s
```

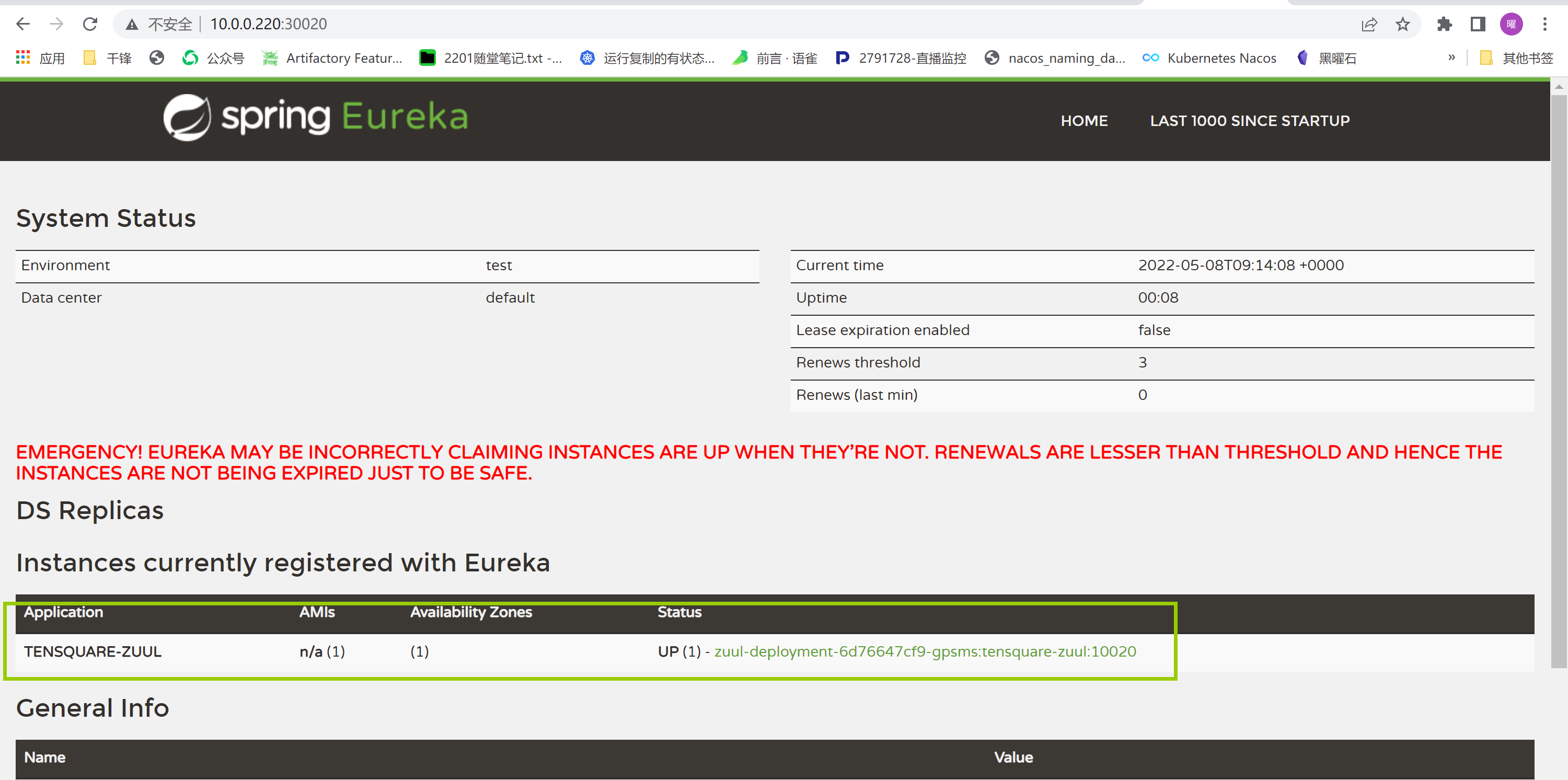

Verify that the service has joined the registry:

#### 4. Deploying MySQL

Create the ReplicationController and Service for MySQL:

```shell
[root@master mysql]# cat mysql-svc.yaml
apiVersion: v1
kind: Service
metadata:
  name: mysql-svc
  labels:
    name: mysql-svc
spec:
  type: NodePort
  ports:
  - port: 3306
    protocol: TCP
    targetPort: 3306
    name: http
    nodePort: 30023
  selector:
    name: mysql-pod
[root@master mysql]# cat mysql-rc.yaml
apiVersion: v1
kind: ReplicationController
metadata:
  name: mysql-rc
  labels:
    name: mysql-rc
spec:
  replicas: 1
  selector:
    name: mysql-pod
  template:
    metadata:
      labels:
        name: mysql-pod
    spec:
      containers:
      - name: mysql
        image: 10.0.0.230/xingdian/mysql:v1
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 3306
        env:
        - name: MYSQL_ROOT_PASSWORD
          value: "mysql"
```

Create the resources:

```shell
[root@master mysql]# kubectl create -f mysql-rc.yaml
replicationcontroller/mysql-rc created
[root@master mysql]# kubectl create -f mysql-svc.yaml
service/mysql-svc created
```

Verify:

```shell
[root@master mysql]# kubectl get pod
NAME                                READY   STATUS    RESTARTS   AGE
eureka-deployment-69c575d95-hx8s6   1/1     Running   0          29m
mysql-rc-sbdcl                      1/1     Running   0          8m41s
zuul-deployment-6d76647cf9-gpsms    1/1     Running   0          21m
[root@master mysql]# kubectl get svc
NAME             TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)           AGE
eureka-service   NodePort    10.107.243.240   <none>        10086:30020/TCP   29m
kubernetes       ClusterIP   10.96.0.1        <none>        443/TCP           11d
mysql-svc        NodePort    10.98.4.62       <none>        3306:30023/TCP    9m1s
zuul-service     NodePort    10.103.35.255    <none>        10020:30021/TCP   22m
```

Create the databases:

```shell
[root@nfs-harbor ~]# mysql -u root -pmysql -h 10.0.0.220 -P 30023
Welcome to the MariaDB monitor.  Commands end with ; or \g.
Your MySQL connection id is 2
Server version: 5.7.38 MySQL Community Server (GPL)

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MySQL [(none)]> create database tensquare_user charset=utf8;
Query OK, 1 row affected (0.00 sec)

MySQL [(none)]> create database tensquare_gathering charset=utf8;
Query OK, 1 row affected (0.01 sec)

MySQL [(none)]> exit
Bye
```

Import the data:

```shell
[root@nfs-harbor ~]# mysql -u root -pmysql -h 10.0.0.220 -P 30023
Welcome to the MariaDB monitor.  Commands end with ; or \g.
Your MySQL connection id is 3
Server version: 5.7.38 MySQL Community Server (GPL)

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MySQL [(none)]> source /var/ftp/share/tensquare_user.sql

MySQL [tensquare_user]> source /var/ftp/share/tensquare_gathering.sql

MySQL [tensquare_gathering]> exit
Bye
```

Verify:

```shell
[root@nfs-harbor ~]# mysql -u root -pmysql -h 10.0.0.220 -P 30023
Welcome to the MariaDB monitor.  Commands end with ; or \g.
Your MySQL connection id is 3
Server version: 5.7.38 MySQL Community Server (GPL)

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MySQL [(none)]> show databases;
+---------------------+
| Database            |
+---------------------+
| information_schema  |
| mysql               |
| performance_schema  |
| sys                 |
| tensquare_gathering |
| tensquare_user      |
+---------------------+
6 rows in set (0.00 sec)

MySQL [(none)]> use tensquare_gathering
Reading table information for completion of table and column names
You can turn off this feature to get a quicker startup with -A

Database changed
MySQL [tensquare_gathering]> show tables;
+-------------------------------+
| Tables_in_tensquare_gathering |
+-------------------------------+
| tb_city                       |
| tb_gathering                  |
+-------------------------------+
2 rows in set (0.00 sec)

MySQL [tensquare_gathering]> use tensquare_user
Reading table information for completion of table and column names
You can turn off this feature to get a quicker startup with -A

Database changed
MySQL [tensquare_user]> show tables;
+--------------------------+
| Tables_in_tensquare_user |
+--------------------------+
| tb_admin                 |
+--------------------------+
1 row in set (0.01 sec)
```
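
The database creation step can also be run non-interactively, which makes it repeatable. The following is a sketch only: it reuses the host, port, and credentials shown in the sessions above, and `create_db_sql` is a hypothetical helper that is not part of the original procedure.

```shell
# Hypothetical helper: print one idempotent CREATE DATABASE statement per
# database name given as an argument (utf8, matching the interactive session).
create_db_sql() {
  for db in "$@"; do
    printf 'CREATE DATABASE IF NOT EXISTS `%s` CHARACTER SET utf8;\n' "$db"
  done
}
```

The statements can be piped straight into the client, for example `create_db_sql tensquare_user tensquare_gathering | mysql -u root -pmysql -h 10.0.0.220 -P 30023`. Because of `IF NOT EXISTS`, running it a second time does not fail.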

#### 5. Deploying admin_service

Create the Deployment and Service for admin_service:

```shell
[root@master xingdian]# cat Admin-service.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: admin-deployment
  labels:
    app: admin
spec:
  replicas: 1
  selector:
    matchLabels:
      app: admin
  template:
    metadata:
      labels:
        app: admin
    spec:
      containers:
      - name: admin
        image: 10.0.0.230/xingdian/admin_service:v2022.1
        ports:
        - containerPort: 9001
---
apiVersion: v1
kind: Service
metadata:
  name: admin-service
  labels:
    app: admin
spec:
  type: NodePort
  ports:
  - port: 9001
    name: admin
    targetPort: 9001
    nodePort: 30024
  selector:
    app: admin
```

Create the resources:

```shell
[root@master xingdian]# kubectl create -f Admin-service.yaml
deployment.apps/admin-deployment created
service/admin-service created
```

Verify:

```shell
[root@master xingdian]# kubectl get pod
NAME                                READY   STATUS    RESTARTS   AGE
admin-deployment-54c5664d69-l2lbc   1/1     Running   0          23s
eureka-deployment-69c575d95-mrj66   1/1     Running   0          47m
mysql-rc-zgxk4                      1/1     Running   0          7m23s
zuul-deployment-6d76647cf9-gpsms    1/1     Running   0          39m
[root@master xingdian]# kubectl get svc
NAME             TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)           AGE
admin-service    NodePort    10.101.251.47    <none>        9001:30024/TCP    6s
eureka-service   NodePort    10.107.243.240   <none>        10086:30020/TCP   47m
kubernetes       ClusterIP   10.96.0.1        <none>        443/TCP           11d
mysql-svc        NodePort    10.98.4.62       <none>        3306:30023/TCP    26m
zuul-service     NodePort    10.103.35.255    <none>        10020:30021/TCP   39m
```

Verify in the registry (Eureka):

<img src="https://xingdian-image.oss-cn-beijing.aliyuncs.com/xingdian-image/image-20220509013257937.png" alt="image-20220509013257937" style="zoom:50%;" />

#### 6. Deploying gathering

Create the Deployment and Service for gathering:

```shell
[root@master xingdian]# cat Gathering.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: gathering-deployment
  labels:
    app: gathering
spec:
  replicas: 1
  selector:
    matchLabels:
      app: gathering
  template:
    metadata:
      labels:
        app: gathering
    spec:
      containers:
      - name: nginx
        image: 10.0.0.230/xingdian/gathering:v2022.1
        ports:
        - containerPort: 9002
---
apiVersion: v1
kind: Service
metadata:
  name: gathering-service
  labels:
    app: gathering
spec:
  type: NodePort
  ports:
  - port: 9002
    name: gathering
    targetPort: 9002
    nodePort: 30022
  selector:
    app: gathering
```

Create the resources:

```shell
[root@master xingdian]# kubectl create -f Gathering.yaml
deployment.apps/gathering-deployment created
service/gathering-service created
```

Verify:

```shell
[root@master xingdian]# kubectl get pod
NAME                                  READY   STATUS    RESTARTS   AGE
admin-deployment-54c5664d69-2tqlw     1/1     Running   0          33s
eureka-deployment-69c575d95-xzx9t     1/1     Running   0          13m
gathering-deployment-6fcdd5d5-wbsxt   1/1     Running   0          27s
mysql-rc-zgxk4                        1/1     Running   0          28m
zuul-deployment-6d76647cf9-jkm7f      1/1     Running   0          12m
```

Verify in the registry (Eureka):

<img src="https://xingdian-image.oss-cn-beijing.aliyuncs.com/xingdian-image/image-20220509005823566.png" alt="image-20220509005823566" style="zoom:50%;" />


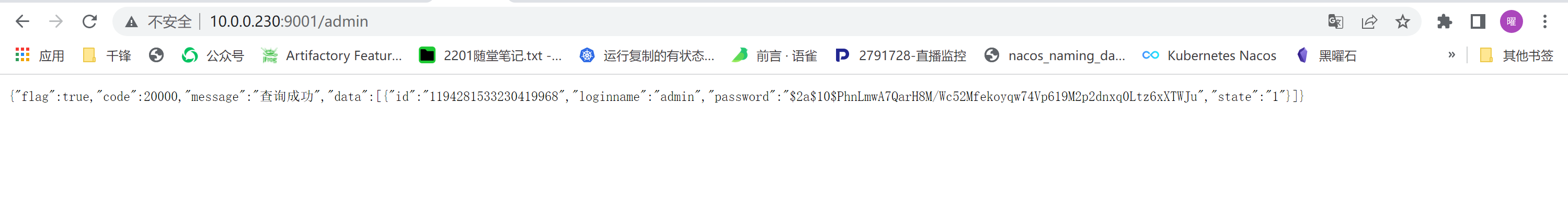
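
The four service manifests in this section share one shape: a single-replica Deployment plus a NodePort Service whose `port` and `targetPort` equal the container port. A minimal sketch of a generator for that shape, assuming the naming pattern `<app>-deployment` / `<app>-service`; `render_app` is a hypothetical helper and not part of the original procedure.

```shell
# Hypothetical generator: print a Deployment and a NodePort Service.
# Arguments: app name, image reference, container port, node port.
# The container port is used for containerPort, port and targetPort alike,
# so the three values cannot drift apart.
render_app() {
  cat <<EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: $1-deployment
  labels:
    app: $1
spec:
  replicas: 1
  selector:
    matchLabels:
      app: $1
  template:
    metadata:
      labels:
        app: $1
    spec:
      containers:
      - name: $1
        image: $2
        ports:
        - containerPort: $3
---
apiVersion: v1
kind: Service
metadata:
  name: $1-service
  labels:
    app: $1
spec:
  type: NodePort
  ports:
  - port: $3
    name: $1
    targetPort: $3
    nodePort: $4
  selector:
    app: $1
EOF
}
```

The output can be piped to the cluster, for example `render_app zuul 10.0.0.230/xingdian/zuul:v2022.1 10020 30021 | kubectl apply -f -`.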

#### 7. Testing the API endpoints in a browser

@ -0,0 +1,335 @@

<h1><center>Deploying a Website Project with Kubernetes</center></h1>

------

## I: Environment Preparation

#### 1. Kubernetes cluster

The cluster must be running normally. For example, check it with the following command:

```shell
[root@master ~]# kubectl get node
NAME     STATUS   ROLES                  AGE     VERSION
master   Ready    control-plane,master   5d19h   v1.23.1
node-1   Ready    <none>                 5d19h   v1.23.1
node-2   Ready    <none>                 5d19h   v1.23.1
node-3   Ready    <none>                 5d19h   v1.23.1
```

#### 2. Harbor private registry

Harbor serves container images to the Kubernetes cluster.

<img src="https://xingdian-image.oss-cn-beijing.aliyuncs.com/xingdian-image/image-20220502184026483.png" alt="image-20220502184026483" style="zoom:50%;" />

## II: Project Deployment

#### 1. Building the images

Download the software:

```shell
wget https://nginx.org/download/nginx-1.20.2.tar.gz
```

Download the project package:

```shell
git clone https://github.com/blackmed/xingdian-project.git
```

Dockerfile for the CentOS base image:

```shell
[root@nfs-harbor ~]# cat Dockerfile
FROM daocloud.io/centos:7
MAINTAINER "xingdianvip@gmail.com"
ENV container docker
RUN yum -y swap -- remove fakesystemd -- install systemd systemd-libs
RUN yum -y update; yum clean all; \
(cd /lib/systemd/system/sysinit.target.wants/; for i in *; do [ $i == systemd-tmpfiles-setup.service ] || rm -f $i; done); \
rm -f /lib/systemd/system/multi-user.target.wants/*;\
rm -f /etc/systemd/system/*.wants/*;\
rm -f /lib/systemd/system/local-fs.target.wants/*; \
rm -f /lib/systemd/system/sockets.target.wants/*udev*; \
rm -f /lib/systemd/system/sockets.target.wants/*initctl*; \
rm -f /lib/systemd/system/basic.target.wants/*;\
rm -f /lib/systemd/system/anaconda.target.wants/*;
VOLUME [ "/sys/fs/cgroup" ]
CMD ["/usr/sbin/init"]
[root@nfs-harbor ~]# docker build -t xingdian .
```

Build the project image:

```shell
[root@nfs-harbor nginx]# cat Dockerfile
FROM xingdian
ADD nginx-1.20.2.tar.gz /usr/local
RUN rm -rf /etc/yum.repos.d/*
COPY CentOS-Base.repo /etc/yum.repos.d/
COPY epel.repo /etc/yum.repos.d/
RUN yum clean all && yum makecache fast
RUN yum -y install gcc gcc-c++ openssl openssl-devel pcre-devel zlib-devel make
WORKDIR /usr/local/nginx-1.20.2
RUN ./configure --prefix=/usr/local/nginx
RUN make && make install
WORKDIR /usr/local/nginx
ENV PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/local/nginx/sbin
EXPOSE 80
RUN rm -rf /usr/local/nginx/conf/nginx.conf
COPY nginx.conf /usr/local/nginx/conf/
RUN mkdir /dist
CMD ["nginx", "-g", "daemon off;"]
[root@nfs-harbor nginx]# docker build -t nginx:v2 .
```

Note:

The CentOS Base and EPEL repository files (CentOS-Base.repo and epel.repo) must be placed in the build directory beforehand.

#### 2. Pushing the project image to Harbor

Retag the image:

```shell
[root@nfs-harbor ~]# docker tag nginx:v2 10.0.0.230/xingdian/nginx:v2
```

Log in to the private registry:

```shell
[root@nfs-harbor ~]# docker login 10.0.0.230
Username: xingdian
Password:
```

Push the image:

```shell
[root@nfs-harbor ~]# docker push 10.0.0.230/xingdian/nginx:v2
```

Note:

Docker pushes over HTTPS by default. Because the Harbor instance deployed here serves HTTP, apply the Docker change described at the start of step 3 (connecting the Kubernetes cluster to Harbor) on this host before pushing.

#### 3. Connecting the Kubernetes cluster to Harbor

On every node of the Kubernetes cluster, allow Docker to use the HTTP registry (it uses HTTPS by default) by adding `--insecure-registry` with the Harbor address to the `ExecStart` line:

```shell
[root@master ~]# vim /etc/systemd/system/multi-user.target.wants/docker.service
ExecStart=/usr/bin/dockerd -H fd:// --insecure-registry 10.0.0.230 --containerd=/run/containerd/containerd.sock
[root@master ~]# systemctl daemon-reload
[root@master ~]# systemctl restart docker
```
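
Editing the unit file works, but a package upgrade may overwrite it. Docker also reads the same setting from `/etc/docker/daemon.json` under the `insecure-registries` key. A sketch, assuming the registry address 10.0.0.230 used elsewhere in this guide and no existing daemon.json that would need merging; `insecure_registry_json` is a hypothetical helper.

```shell
# Hypothetical helper: print a minimal daemon.json that marks one registry
# as insecure (plain HTTP). Does not merge with an existing file.
insecure_registry_json() {
  printf '{\n  "insecure-registries": ["%s"]\n}\n' "$1"
}
```

It could be applied with `insecure_registry_json 10.0.0.230 > /etc/docker/daemon.json && systemctl restart docker`. The option should be set in only one place: dockerd refuses to start when the same directive is given both as a flag and in the configuration file.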

Create a secret in the Kubernetes cluster for authenticating to Harbor:

```shell
[root@master ~]# kubectl create secret docker-registry regcred --docker-server=10.0.0.230 --docker-username=diange --docker-password=QianFeng@123
[root@master ~]# kubectl get secret
NAME      TYPE                             DATA   AGE
regcred   kubernetes.io/dockerconfigjson   1      19h
```

Note:

regcred: the name of the secret

--docker-server: the address of the registry server

--docker-username: the Harbor user name

--docker-password: the Harbor password
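
Creating the secret does not by itself make pods use it. A pod has to reference it through `imagePullSecrets`, either in its own spec or through its service account. The sketch below prints the JSON patch that attaches a secret to a service account; applying it to the `default` service account is an assumption about how this cluster is meant to be set up, and `pull_secret_patch` is a hypothetical helper.

```shell
# Hypothetical helper: print the patch body that adds an image pull secret
# to a service account.
pull_secret_patch() {
  printf '{"imagePullSecrets": [{"name": "%s"}]}\n' "$1"
}
```

For example, `kubectl patch serviceaccount default -p "$(pull_secret_patch regcred)"` would make new pods in the current namespace pull with `regcred` unless they specify otherwise.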

#### 4. Deploying NFS

NFS provides persistent storage for the Kubernetes cluster. The cluster nodes must also have nfs-utils installed so that they can mount NFS file systems.

```shell
[root@nfs-harbor ~]# yum -y install nfs-utils
[root@nfs-harbor ~]# systemctl start nfs
[root@nfs-harbor ~]# systemctl enable nfs
```

Create the shared directory and export it:

```shell
[root@nfs-harbor ~]# mkdir /kubernetes-1
[root@nfs-harbor ~]# cat /etc/exports
/kubernetes-1 *(rw,no_root_squash,sync)
[root@nfs-harbor ~]# exportfs -rv
```

Place the project in the shared directory:

```shell
[root@nfs-harbor ~]# git clone https://github.com/blackmed/xingdian-project.git
[root@nfs-harbor ~]# unzip dist.zip
[root@nfs-harbor ~]# cp -r dist/* /kubernetes-1
```
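
The export in this step uses `*`, which lets any host mount the share with root privileges preserved (`no_root_squash`). Where that is too permissive, the client field can be narrowed to the cluster's subnet. A sketch, assuming the nodes sit in 10.0.0.0/24 (inferred from the 10.0.0.x addresses in this guide, not stated by the source); `export_line` is a hypothetical helper.

```shell
# Hypothetical helper: print an /etc/exports entry for one directory and
# one client specification, with the same options used in this guide.
export_line() {
  printf '%s %s(rw,no_root_squash,sync)\n' "$1" "$2"
}
```

For example, `export_line /kubernetes-1 10.0.0.0/24` prints `/kubernetes-1 10.0.0.0/24(rw,no_root_squash,sync)`, which would replace the wildcard entry before running `exportfs -rv`.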

#### 5.创建statefulset部署项目

|

||||

|

||||

该yaml文件中除了statefulset以外还有service、PersistentVolume、StorageClass

|

||||

|

||||

```shell

|

||||

[root@master xingdian]# cat Statefulset.yaml
apiVersion: v1
kind: Service
metadata:
  name: nginx
  labels:
    app: nginx
spec:
  type: NodePort
  ports:
  - port: 80
    name: web
    targetPort: 80
    nodePort: 30010
  selector:
    app: nginx
---
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: xingdian
provisioner: example.com/external-nfs
parameters:
  server: 10.0.0.230
  path: /kubernetes-1
  readOnly: "false"
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: xingdian-1
spec:
  capacity:
    storage: 1Gi
  volumeMode: Filesystem
  accessModes:
    - ReadWriteOnce
  storageClassName: xingdian
  nfs:
    path: /kubernetes-1
    server: 10.0.0.230
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: xingdian-2
spec:
  capacity:
    storage: 1Gi
  volumeMode: Filesystem
  accessModes:
    - ReadWriteOnce
  storageClassName: xingdian
  nfs:
    path: /kubernetes-1
    server: 10.0.0.230
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: web
spec:
  selector:
    matchLabels:
      app: nginx
  serviceName: "nginx"
  replicas: 2
  template:
    metadata:
      labels:
        app: nginx
    spec:
      terminationGracePeriodSeconds: 10
      containers:
      - name: nginx
        image: 10.0.0.230/xingdian/nginx:v2
        ports:
        - containerPort: 80
          name: web
        volumeMounts:
        - name: www
          mountPath: /dist
  volumeClaimTemplates:
  - metadata:
      name: www
    spec:
      accessModes: [ "ReadWriteOnce" ]
      storageClassName: "xingdian"
      resources:
        requests:
          storage: 1Gi
```

#### 6. Run

```shell
[root@master xingdian]# kubectl create -f Statefulset.yaml
service/nginx created
storageclass.storage.k8s.io/xingdian created
persistentvolume/xingdian-1 created
persistentvolume/xingdian-2 created
statefulset.apps/web created
```

## 3: Project Verification

#### 1. PV verification

```shell
[root@master xingdian]# kubectl get pv
NAME         CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM               STORAGECLASS   REASON   AGE
xingdian-1   1Gi        RWO            Retain           Bound    default/www-web-1   xingdian                9m59s
xingdian-2   1Gi        RWO            Retain           Bound    default/www-web-0   xingdian                9m59s
```

#### 2. PVC verification

```shell
[root@master xingdian]# kubectl get pvc
NAME        STATUS   VOLUME       CAPACITY   ACCESS MODES   STORAGECLASS   AGE
www-web-0   Bound    xingdian-2   1Gi        RWO            xingdian       10m
www-web-1   Bound    xingdian-1   1Gi        RWO            xingdian       10m
```
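
The claim names in this output follow the StatefulSet convention `<volumeClaimTemplate name>-<StatefulSet name>-<ordinal>`, which is why `www-web-0` and `www-web-1` appear. A minimal sketch that prints the names to expect for a given replica count; `pvc_names` is a hypothetical helper written for illustration, not part of kubectl:

```shell
# pvc_names TEMPLATE STATEFULSET REPLICAS
# Prints the PVC name expected for each replica ordinal (0 .. REPLICAS-1).
pvc_names() {
    i=0
    while [ "$i" -lt "$3" ]; do
        echo "$1-$2-$i"
        i=$((i + 1))
    done
}

# Template "www", StatefulSet "web", 2 replicas, as in Statefulset.yaml
pvc_names www web 2
```

Comparing this list with `kubectl get pvc` is a quick way to spot a replica whose claim was never created.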

#### 3. StorageClass verification

```shell
[root@master xingdian]# kubectl get storageclass
NAME       PROVISIONER                RECLAIMPOLICY   VOLUMEBINDINGMODE   ALLOWVOLUMEEXPANSION   AGE
xingdian   example.com/external-nfs   Delete          Immediate           false                  10m
```

#### 4. StatefulSet verification

```shell
[root@master xingdian]# kubectl get statefulset
NAME   READY   AGE
web    2/2     13m
[root@master xingdian]# kubectl get pod
NAME    READY   STATUS    RESTARTS   AGE
web-0   1/1     Running   0          13m
web-1   1/1     Running   0          13m
```

#### 5. Service verification

```shell
[root@master xingdian]# kubectl get svc
NAME    TYPE       CLUSTER-IP      EXTERNAL-IP   PORT(S)        AGE
nginx   NodePort   10.111.189.32   <none>        80:30010/TCP   13m
```
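
The `80:30010/TCP` column shows service port 80 published on node port 30010. Kubernetes only accepts a `nodePort` that falls inside the API server's configured range, which is 30000-32767 unless `--service-node-port-range` has been changed. A small sketch of that check, assuming the default range; `valid_nodeport` is a hypothetical helper:

```shell
# valid_nodeport PORT
# Succeeds when PORT lies in the default NodePort range 30000-32767.
valid_nodeport() {
    [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

if valid_nodeport 30010; then
    echo "30010 is inside the default NodePort range"
fi
```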

#### 6. Browser access

<img src="https://xingdian-image.oss-cn-beijing.aliyuncs.com/xingdian-image/image-20220502193031689.png" alt="image-20220502193031689" style="zoom:80%;" />

<h1><center>Building an Elasticsearch Cluster on Kubernetes</center></h1>

------

## 1: Environment Preparation

#### 1. Kubernetes cluster environment

| Node | Address |
| :---------------: | :---------: |
| Kubernetes-Master | 10.9.12.206 |
| Kubernetes-Node-1 | 10.9.12.205 |
| Kubernetes-Node-2 | 10.9.12.204 |
| Kubernetes-Node-3 | 10.9.12.203 |
| DNS server | 10.9.12.210 |
| Proxy server | 10.9.12.209 |
| NFS storage | 10.9.12.250 |

#### 2. Kuboard cluster management

## 2: Building the ES Cluster

#### 1. Setting up persistent storage

1. Deploy the NFS server

Omitted.

2. Create the shared directory

The directory is created with the following script:

```shell
[root@xingdiancloud-1 ~]# cat nfs.sh
#!/bin/bash
# Create a directory and publish it as an NFS export.
read -p "Enter the shared directory to create: " dir
if [ -d "$dir" ]; then
    # The directory already exists, so ask for a different path.
    echo "Please enter the shared directory again: "
    read again_dir
    mkdir -p "$again_dir"
    echo "Shared directory created"
    read -p "Enter the clients allowed to mount the share: " ips
    echo "$again_dir ${ips}(rw,sync,no_root_squash)" >> /etc/exports
    # Re-export only when exactly one line of /etc/exports matches the directory.
    xingdian=$(grep -c "$again_dir" /etc/exports)
    if [ "$xingdian" -eq 1 ]; then
        echo "Share configured successfully"
        exportfs -rv >/dev/null
        exit
    else
        exit
    fi
else
    mkdir -p "$dir"
    echo "Shared directory created"
    read -p "Enter the clients allowed to mount the share: " ips
    echo "$dir ${ips}(rw,sync,no_root_squash)" >> /etc/exports
    # Re-export only when exactly one line of /etc/exports matches the directory.
    xingdian=$(grep -c "$dir" /etc/exports)
    if [ "$xingdian" -eq 1 ]; then
        echo "Share configured successfully"
        exportfs -rv >/dev/null
        exit
    else
        exit
    fi
fi
```
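
The script appends to `/etc/exports` each time it runs, so entering a directory that is already exported can leave a duplicate entry, after which the count check no longer equals 1 and `exportfs` is skipped without any message. One way to make the append idempotent is sketched below. `add_export` is a hypothetical helper, the client specification `10.9.12.0/24` is an assumption based on the addresses in the environment table, and the file path is a parameter so the function can be tried on a scratch file before touching `/etc/exports`.

```shell
# add_export FILE DIR CLIENTS
# Appends "DIR CLIENTS(rw,sync,no_root_squash)" to FILE unless DIR is already exported there.
add_export() {
    file=$1
    dir=$2
    clients=$3
    # Compare DIR with the first field only, so /data does not match /data/es-data.
    if awk -v d="$dir" '$1 == d { found = 1 } END { exit !found }' "$file" 2>/dev/null; then
        echo "already exported: $dir"
    else
        echo "$dir ${clients}(rw,sync,no_root_squash)" >> "$file"
        echo "added: $dir"
    fi
}

# Demonstration on a scratch file: the second call adds nothing.
exports_file=$(mktemp)
add_export "$exports_file" /data/es-data "10.9.12.0/24"
add_export "$exports_file" /data/es-data "10.9.12.0/24"
cat "$exports_file"
```

On the NFS server the same call would target `/etc/exports`, followed by `exportfs -rv` to reload the export table.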

3. Create the storage class

```yaml
[root@xingdiancloud-master ~]# vim namespace.yaml
apiVersion: v1
kind: Namespace
metadata:
  name: logging
[root@xingdiancloud-master ~]# vim storageclass.yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  annotations:
    k8s.kuboard.cn/storageNamespace: logging
    k8s.kuboard.cn/storageType: nfs_client_provisioner
  name: data-es
parameters:
  archiveOnDelete: 'false'
provisioner: nfs-data-es
reclaimPolicy: Retain
volumeBindingMode: Immediate
```

4. Create the persistent volume

```yaml
[root@xingdiancloud-master ~]# vim persistenVolume.yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  annotations:
    pv.kubernetes.io/bound-by-controller: 'yes'
  finalizers:
    - kubernetes.io/pv-protection
  name: nfs-pv-data-es
spec:
  accessModes:
    - ReadWriteMany
  capacity:
    storage: 100Gi
  claimRef:
    apiVersion: v1
    kind: PersistentVolumeClaim
    name: nfs-pvc-data-es
    namespace: kube-system
  nfs:
    path: /data/es-data
    server: 10.9.12.250
  persistentVolumeReclaimPolicy: Retain
  storageClassName: nfs-storageclass-provisioner
  volumeMode: Filesystem
```

Note: the storage class and the persistent volume can also be created through the Kuboard UI.

#### 2. Label the nodes

```shell
[root@xingdiancloud-master ~]# kubectl label nodes xingdiancloud-node-1 es=log
```

Note:

Every node that will run ES must carry this label.

The label is matched by the `nodeSelector` of the StatefulSet that deploys the ES cluster in the next step.
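
Because every ES node needs the label, it can be applied in a loop. The sketch below only prints the commands (a dry run). The node names `xingdiancloud-node-2` and `xingdiancloud-node-3` are assumptions extrapolated from the single name shown above, and `label_cmds` is a hypothetical helper.

```shell
# label_cmds NODE...
# Prints one "kubectl label" command per node; nothing is executed.
label_cmds() {
    for node in "$@"; do
        echo kubectl label nodes "$node" es=log --overwrite
    done
}

label_cmds xingdiancloud-node-1 xingdiancloud-node-2 xingdiancloud-node-3
```

Piping the output to `sh` applies the labels, and `kubectl get nodes -l es=log` then lists the nodes that carry it.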

#### 3. Deploy the ES cluster

Note: each node of an ES cluster needs a unique network identity and persistent storage. A Deployment provides neither and is suited only to stateless applications, so this guide deploys ES with a StatefulSet.

```yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: es
  namespace: logging
spec:
  serviceName: elasticsearch
  replicas: 3
  selector:
    matchLabels:
      app: elasticsearch
  template:
    metadata:
      labels:
        app: elasticsearch
    spec:
      nodeSelector:
        es: log
      initContainers:
      - name: increase-vm-max-map
        image: busybox
        command: ["sysctl", "-w", "vm.max_map_count=262144"]
        securityContext:
          privileged: true
      - name: increase-fd-ulimit
        image: busybox
        command: ["sh", "-c", "ulimit -n 65536"]
        securityContext:
          privileged: true
      containers:
      - name: elasticsearch
        image: docker.elastic.co/elasticsearch/elasticsearch:7.6.2
        ports:
        - name: rest
          containerPort: 9200
        - name: inter
          containerPort: 9300
        resources:
          limits:
            cpu: 500m
            memory: 4000Mi
          requests:
            cpu: 500m
            memory: 3000Mi
        volumeMounts:
        - name: data
          mountPath: /usr/share/elasticsearch/data
        env:
        - name: cluster.name
          value: k8s-logs
        - name: node.name
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: cluster.initial_master_nodes
          value: "es-0,es-1,es-2"
        - name: discovery.zen.minimum_master_nodes
          value: "2"
        - name: discovery.seed_hosts
          value: "elasticsearch"
        - name: ES_JAVA_OPTS
          value: "-Xms512m -Xmx512m"
        - name: network.host
          value: "0.0.0.0"
        - name: node.max_local_storage_nodes
          value: "3"
  volumeClaimTemplates:
  - metadata:
      name: data
      labels:
        app: elasticsearch
    spec:
      accessModes: [ "ReadWriteMany" ]
      storageClassName: data-es
      resources:
        requests:
          storage: 25Gi
```
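
The value `2` given for `discovery.zen.minimum_master_nodes` matches the usual majority rule for master-eligible nodes, floor(n / 2) + 1, which yields 2 for the three replicas here. A quick sketch of that arithmetic follows; `quorum` is a hypothetical helper. Elasticsearch 7.x is documented as managing the voting quorum itself and ignoring this setting, so the snippet illustrates the rule rather than a required configuration step.

```shell
# quorum N
# Prints the majority size for N master-eligible nodes: floor(N / 2) + 1.
quorum() {
    echo $(( $1 / 2 + 1 ))
}

# Three replicas, as in the StatefulSet above
quorum 3
```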

#### 4. Create a Service to expose the ES cluster

```yaml
[root@xingdiancloud-master ~]# vim elasticsearch-svc.yaml
kind: Service
apiVersion: v1
metadata:
  name: elasticsearch
  namespace: logging
  labels:
    app: elasticsearch
spec:
  selector:
    app: elasticsearch
  type: NodePort
  ports:
    - port: 9200
      targetPort: 9200
      nodePort: 30010
      name: rest
    - port: 9300
      name: inter-node
```

#### 5. Access test

Note:

Access the cluster with the elasticVUE plugin.

The cluster status is healthy.

All cluster nodes are healthy.
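
The same check can be made from a shell by querying the `_cluster/health` endpoint through the NodePort. The sketch below assumes the Service is reachable at 10.9.12.205:30010 (a node address from the environment table plus the `nodePort` from the Service manifest). `health_status` is a hypothetical helper that extracts the `status` field from the JSON response.

```shell
# Reads a _cluster/health JSON document on stdin and prints its "status" value.
health_status() {
    sed -n 's/.*"status"[[:space:]]*:[[:space:]]*"\([a-z]*\)".*/\1/p'
}

# Against a live cluster (the address is an assumption):
#   curl -s http://10.9.12.205:30010/_cluster/health | health_status
# Offline demonstration with a sample response:
echo '{"cluster_name":"k8s-logs","status":"green","number_of_nodes":3}' | health_status
```

A `green` status means every primary and replica shard is allocated; `yellow` means some replicas are unassigned, and `red` means at least one primary shard is missing.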

## 3: Proxy and DNS Configuration

#### 1. Proxy configuration

Note:

Deployment of the proxy is omitted.

Nginx serves as the proxy here.

Access is restricted with per-user basic authentication; create the user and password yourself with `htpasswd`.

The configuration file is as follows:

```shell
[root@proxy ~]# cat /etc/nginx/conf.d/elasticsearch.conf
server {
    listen 80;
    server_name es.xingdian.com;
    location / {
        auth_basic "xingdiancloud kibana";
        auth_basic_user_file /etc/nginx/pass;
        proxy_pass http://<address>:<port>;
    }
}
```
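
The `proxy_pass` directive needs a concrete upstream. Given the manifests in this guide, one plausible value is a Kubernetes node address combined with the Service's NodePort. The sketch below renders the server block for such a pair. The address 10.9.12.205 and port 30010 are assumptions taken from the environment table and the Service manifest, and `render_es_proxy` is a hypothetical helper.

```shell
# render_es_proxy ADDRESS PORT
# Prints the Nginx server block with the upstream filled in.
render_es_proxy() {
    cat <<EOF
server {
    listen 80;
    server_name es.xingdian.com;
    location / {
        auth_basic "xingdiancloud kibana";
        auth_basic_user_file /etc/nginx/pass;
        proxy_pass http://$1:$2;
    }
}
EOF
}

render_es_proxy 10.9.12.205 30010
```

The output can be redirected to `/etc/nginx/conf.d/elasticsearch.conf`. The password file is created with `htpasswd -c /etc/nginx/pass <user>`, and `nginx -t` validates the configuration before a reload.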

#### 2. DNS resolution configuration

Note:

Deployment of the DNS server is omitted.

The zone file is as follows:

```shell
[root@www ~]# cat /var/named/xingdian.com.zone
$TTL 1D
@       IN SOA  @ rname.invalid. (
                                        0       ; serial
                                        1D      ; refresh
                                        1H      ; retry
                                        1W      ; expire
                                        3H )    ; minimum
        NS      @
        A       <DNS server address>
es      A       <proxy server address>
        AAAA    ::1
```
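
With the addresses from the environment table filled in, the `es` record would point at the proxy server. The sketch below formats such a record. It assumes the proxy is 10.9.12.209 as listed in the table, and `a_record` is a hypothetical helper.

```shell
# a_record NAME ADDRESS
# Prints a zone-file A record with the columns padded for alignment.
a_record() {
    printf '%-8s%-8s%s\n' "$1" A "$2"
}

a_record es 10.9.12.209
```

After the zone is reloaded, a query such as `dig +short es.xingdian.com @10.9.12.210` (the DNS server address from the table) should return the proxy address if the record is in place.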

#### 3. Access test

Omitted.
